It is a well-known pattern to use Azure Stream Analytics (ASA) to build data pipelines that store incoming IoT data in an output location such as SQL Server, Event Hubs, or Azure Storage. This becomes even more important when we push ASA to the edge and use it as a data transformation and storage orchestration engine, especially with an on-premises SQL Server acting as a historian. It seems to be a straightforward process, but there is a small catch: a technical/product limitation in Azure. It took us a few precious hours to understand it.

Short answer (if you hate scrolling like me):

“If you want to connect ASA with SQL Server, ensure that you have a trusted CA certificate with a proper certificate chain installed on the SQL Server VM.”

For the patient ones who want the backstory 🙂, read along.

What were we doing?

We were trying to wire up an Azure IoT Edge module with a SQL Server on a VM. This seemed quite easy according to the documentation, but I ended up with a curious certificate error.

As a troubleshooting step, I created the same output in ASA in the cloud and connected it to the same SQL Server on the VM, to rule out any Edge VM certificate issues. This should be quite simple if we follow this documentation:

https://docs.microsoft.com/en-us/azure/stream-analytics/sql-database-output

No big deal. Or so I thought.

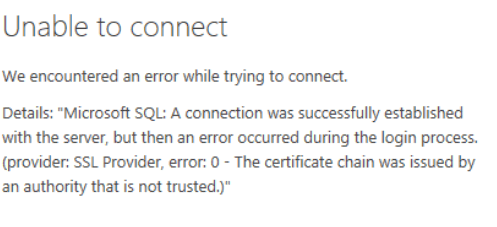

But I still got the famous certificate chain error.

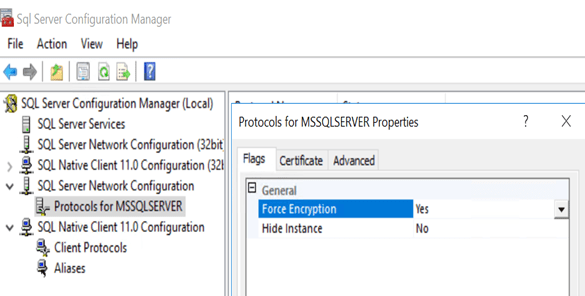

So I started following the documented steps below:

Using Encryption Without Validation – SQL Server Native Client | Microsoft Docs

- Set Force Protocol Encryption Client Setting to Yes

- For secure connectivity, ensure that the client and server both require encryption. Also ensure that the server has a verifiable certificate, and that the TrustServerCertificate setting on the client is set to FALSE.

I also created self-signed CA certificates and installed them. But the issue kept coming back.

Solution

Finally, we found out from the Microsoft product team that we need proper CA certificates, with a certificate chain from a well-known authority, to make ASA and SQL Server work together.

One requirement for SQL Server on a VM to work as an output is that the SQL Server must be configured with an SSL certificate issued by a trusted CA. There is no workaround for this. You can’t use a self-signed certificate, or set TrustServerCertificate=True and change SQL Server settings.

1- Regarding the SSL certificate – Make sure to use the DNS-based FQDN for the CN. Here are the full requirements listed.

2- SSL setup in the VM – Follow steps here. If using SQL Server 2016, also put the certificate’s thumbprint in the registry key mentioned in the “Wildcard Certificates” section.
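To make the FQDN requirement from step 1 concrete, here is a minimal Python sketch of the kind of check I mean. It is only an illustration: the function name `cn_matches_host` and the `example.com` host names are made up, and the wildcard rule (a leading `*.` covering exactly one label) is my assumption of the usual convention, not a statement of what SQL Server or ASA actually enforces. The full requirements are in the Microsoft documentation.

```python
def cn_matches_host(common_name: str, host: str) -> bool:
    """Return True if a certificate CN plausibly covers the given FQDN.

    Hypothetical helper for illustration only. Assumes a leading '*.'
    wildcard covers exactly one left-most DNS label.
    """
    cn = common_name.strip().rstrip(".").lower()
    name = host.strip().rstrip(".").lower()

    # A bare machine name (no dots) is not a DNS-based FQDN.
    if "." not in name:
        return False

    # Exact, case-insensitive match.
    if cn == name:
        return True

    # Wildcard match: '*.example.com' covers 'sqlvm.example.com'
    # but not 'a.b.example.com' or 'example.com' itself.
    if cn.startswith("*."):
        suffix = cn[1:]  # e.g. '.example.com'
        if name.endswith(suffix):
            left_label = name[: -len(suffix)]
            return left_label != "" and "." not in left_label

    return False


if __name__ == "__main__":
    print(cn_matches_host("sqlvm.example.com", "SQLVM.example.com"))
    print(cn_matches_host("sqlvm", "sqlvm"))
```

The point is simply that a certificate issued to the short machine name will not match when the server is addressed by its DNS name, which is the name you would enter for the SQL Server output.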

Now, for someone like me who is just doing a dev setup and doesn’t have the luxury of client CA certificates, the options are quite limited.

For IoT Edge, I used custom .NET code with the SQL DB client to communicate with the SQL Server VM, setting TrustServerCertificate=True in the connection string for dev code until I get a CA cert.
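My module code was .NET, but the idea is the same in any client. As a rough sketch in Python, assuming the Microsoft ODBC driver and its `Encrypt` / `TrustServerCertificate` connection-string keywords, the dev-only connection string could be assembled like this. The function name, server name, database, and credentials are all placeholders I made up for illustration.

```python
def build_sql_connection_string(server, database, user, password,
                                trust_server_certificate=False):
    """Build an ODBC-style connection string for SQL Server.

    Hypothetical helper for illustration. Assumes the values contain no
    semicolons or braces, which would need escaping in a real string.
    """
    parts = {
        # Assumed driver name; use whichever ODBC driver is installed.
        "Driver": "{ODBC Driver 18 for SQL Server}",
        "Server": server,
        "Database": database,
        "UID": user,
        "PWD": password,
        # Always encrypt the channel.
        "Encrypt": "yes",
        # 'yes' skips certificate chain validation: dev use only.
        "TrustServerCertificate": "yes" if trust_server_certificate else "no",
    }
    return ";".join(f"{key}={value}" for key, value in parts.items()) + ";"


if __name__ == "__main__":
    # Placeholder values, not a real server.
    conn_str = build_sql_connection_string(
        "sqlvm.example.com,1433", "historian", "dev_user", "dev_password",
        trust_server_certificate=True,
    )
    print(conn_str)
    # With pyodbc installed, this string would be passed to pyodbc.connect(conn_str).
```

Keeping the flag as an explicit parameter that defaults to off makes it harder to ship the dev setting by accident once a proper CA certificate is in place.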

But for the Azure Stream Analytics PaaS service, we can’t enter a connection string, so there is no way to set TrustServerCertificate=True during development. It sure seems like a restriction.

Another option is to use a service like Let’s Encrypt to generate a chained certificate for temporary use. I have yet to try this, but I think it should work.

If you have tried that and it worked, please let me know in the comments.

Back to Sensing, Streaming and Storing..