Sometime during the first half of 2017, our team was experimenting with Unity 3D based applications for Field Service maintenance scenarios. The work brought together many technologies, some of which were still nascent. The solution combined a Mixed Reality application on HoloLens with a Digital Twin of a machine, AI to detect the machine, dynamic data rendering, contextual IoT data for that machine, Skype calling with annotations, multi-person and multi-device collaboration, an action system to take voice and gesture commands, integration with Microsoft D365, and warranty management on blockchain.

Quite over the top, you may say. This was well before Microsoft released out-of-the-box Field Service applications like Remote Assist. My role was to ensure that we harmoniously blended the latest and greatest technologies to create a useful solution for remote maintenance and field services.

Each of the technologies we had brought together to solve complex maintenance issues was independently interesting, and collectively they were captivating. We went ahead, created a solution with some quirky nuances, and applied for a patent in October 2017.

It was an idea whose time had not come. It would later be called the “Metaverse”!

American author Neal Stephenson coined the term “metaverse” in his 1992 science fiction novel Snow Crash, for a virtual reality based world in which humans interact with each other: the next internet.

We understood that it is one thing to do a PoC and a thought leadership exercise, but a totally different ball game to bring so many technologies together without confusing the user and to achieve real-life adoption.

So what was it that we built, versus what are we talking about now?

For that, I guess you need to recall the narrative we had about the Internet of Things a few years back. We always had sensors in homes and industries, various protocols, the ability to issue commands, programmable devices and so on. What was so different about the Internet of Things anyway? The common explanation was that these new and independent technologies had matured enough to interoperate, collect, ingest, make sense, predict, prevent and act, through edge and cloud, and that the resulting platform could scale. This overall maturity, along with the cost benefits, enabled new use cases to be envisioned. Today, we are OK with sharing our heartbeat with technology companies, and operators of manufacturing plants are ready to share sensor heartbeats (and data) with platform vendors, securely, in the hope that it will help predict and prevent failures!

The argument is the same.

While the technologies we used in our patent-pending innovation of 2017 were similar to what we see today, all of them have since attained a higher level of maturity. Massively multiplayer video games in virtual reality, GPU enhancements, web3, NFTs, the collaboration stack, secure data shareability, avatars, robust decentralized data systems, advanced networks, 5G speeds, AI-generated personas, deep learning and the like, along with a lower cost of entry, all come together to bring that “brave new world”, where we can exist in a parallel world – both professionally and personally.

The different components now come under one easier name that conveys the concept to everyone. But,

“We learnt the difference between a Metaverse and a MetAverse application!”

the hard way.

The less spoken magic words are maturity of technology and maturity of experience. Fast forward to today, these two may seem like common-sense terms, considering the cycles of websites, apps, OSes, mobile devices and tablets that we have been through. But with the metaverse, we will be grappling with another maturity curve where all of this will be repeated.

Before 2017:

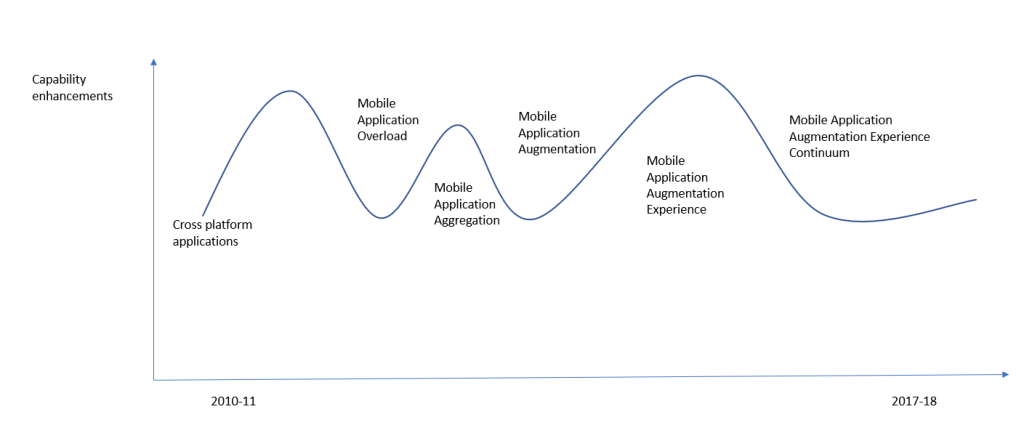

I have been leading mobile application and experience development across a variety of platforms, building applications for enterprise clients. Starting off with Symbian and Qt apps, I transitioned to the Microsoft, Android and iOS app store world. This journey would roughly look like the graph above, where we tried unifying technology for development but still had native platforms competing to create rich apps. Soon, we had too many applications fighting for our constant attention. Enterprise users were struggling to find their apps amidst the BYOD era. Some kept separate work and personal phones to manage this distraction. Eventually, we needed a single phone, but then people started asking for application aggregation on phones to reduce noise and security concerns. In a few years, we discovered new features that could be augmented in phones – Gamification (the subject of one of our earlier patents, for mobile gamified experiences), Siri, Cortana, assistants, Augmented Reality and advanced sensors on phones – and we had a gamut of deep applications which were irreplaceable. Most became fancy features, until we realized that application augmentation needed an experience worth the recall or the monetary value. Thus, finally, we started looking for the holy grail of the experience continuum, where there is a smooth handoff from existing features and devices to new ones.

Note: Meanwhile, gamers kept playing multiplayer games on all form factors 🙂

This realization perhaps gave me an Experience Aversion towards ill-conceived continuums. I believe that is what we have to understand in this new wave as well. Otherwise, our rush to create metaverse experiences will instead breed aversion to the very experiences we fondly construct, making it a MetAverse!

Experience abstraction and designing the continuum..

My experience building the Field Services app was based on these learnings, and in it we tried to segregate human experiences.

Sensory Experiences in general –

Visual, Audio, Olfactory (smell), Tactile and Neural – how are we going to be augmented by these?

- Visual is easy – AR/VR/MR – By now, most of us have experienced this, from 360-degree immersive videos to VR, AR and MR, across various form factors and devices, in tethered and untethered experiences.

- Audio – Aural AI – I still think this is much more powerful than what we have experienced so far. Why? I believe this is one of the only distractions that a human brain allows while doing another task – typing, walking, running etc. True multitasking can be achieved when you are listening to something repetitive like classical music along with your work, or something interesting like an audiobook while doing a chore like folding clothes. Much of my thinking in this area has been inspired by the movie Her. At some point in the future, AI agents like Siri and Alexa will break out of the existing imagination into much more pervasive systems.

- Olfactory and tactile – these are smell and touch based systems – While not much used, they were researched and embedded into our demonstrations as fingerprint sensors and as magnetic and electric vibrations to understand cloth textures remotely.

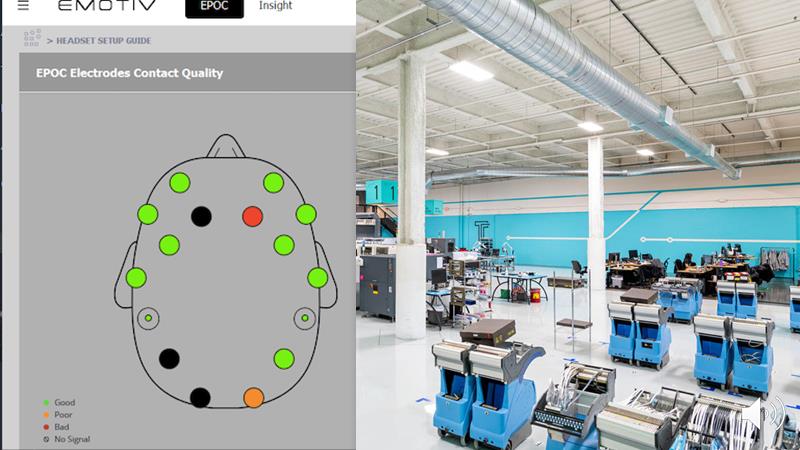

- Neural – We researched how devices like Emotiv, with sensors that could approximately understand human actions and emotions, could one day help create better systems. We tried to create synthetic experiences based on actual experiences. While the devices we had were still evolving, our idea was to understand inherent human behaviours during mundane, repeated tasks like electronics repair.

These design experiences had to be compared in the context of the four use case archetypes below:

- Product Design

- Maintenance Jobs

- Learning & Training Sessions

- End Customer Experience

for every single persona, be it design engineers in a product design team working on a CAD model, maintenance staff learning part disassembly and assembly to build muscle memory, or a customer experiencing a custom dress before an in-store experience.

Put all of these together and you will get a matrix like the one below.

Such an objective view of subjective experiences helped us understand which experiences work well during which phase and which may cause more distraction. It also helped us understand the role of VR and MR in designing products and training employees, and the relevance of VR and AR for (B2C) customer experiences.
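For readers who want to try this exercise themselves, the matrix described above can be sketched as a simple data structure. This is only a minimal illustration, not the tool we used: the names `SENSORY_CHANNELS`, `ARCHETYPES`, `empty_matrix` and `unassessed` are hypothetical, the rows and columns are taken from the lists in this post, and the single rating filled in at the end is purely illustrative.

```python
from itertools import product

# Sensory channels and use case archetypes, as listed in this post.
SENSORY_CHANNELS = ["Visual", "Audio", "Olfactory", "Tactile", "Neural"]
ARCHETYPES = [
    "Product Design",
    "Maintenance Jobs",
    "Learning & Training Sessions",
    "End Customer Experience",
]


def empty_matrix():
    """Return one cell per (archetype, channel) pair; None means 'not yet assessed'."""
    return {pair: None for pair in product(ARCHETYPES, SENSORY_CHANNELS)}


def unassessed(matrix):
    """List the (archetype, channel) cells that still need a judgment."""
    return [pair for pair, rating in matrix.items() if rating is None]


matrix = empty_matrix()

# Hypothetical, illustrative rating only: your own assessment goes here,
# ideally repeated per persona.
matrix[("Product Design", "Visual")] = "helpful"

print(len(matrix), "cells,", len(unassessed(matrix)), "still to assess")
```

Keeping unassessed cells explicit makes it harder to skip the uncomfortable question of whether a given sensory channel helps or distracts in a given archetype.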

I suggest that a Metaverse enthusiast understand the nature of the application, the personas and the human experiences really well in order to create meaningful experiences. The technology stack that defines a metaverse application, such as VR, GPUs, networking devices, AI and web3, will mature eventually to create that seamless continuum. This seamless continuum is three-dimensional: an Experience continuum, an Application continuum and a Technology continuum.

Words of Caution

Trilok R | September 2022

In our pursuit to create a superior metaverse application which is not experience averse, we shouldn’t push our unsuspecting users into a dystopian hyperreality.

