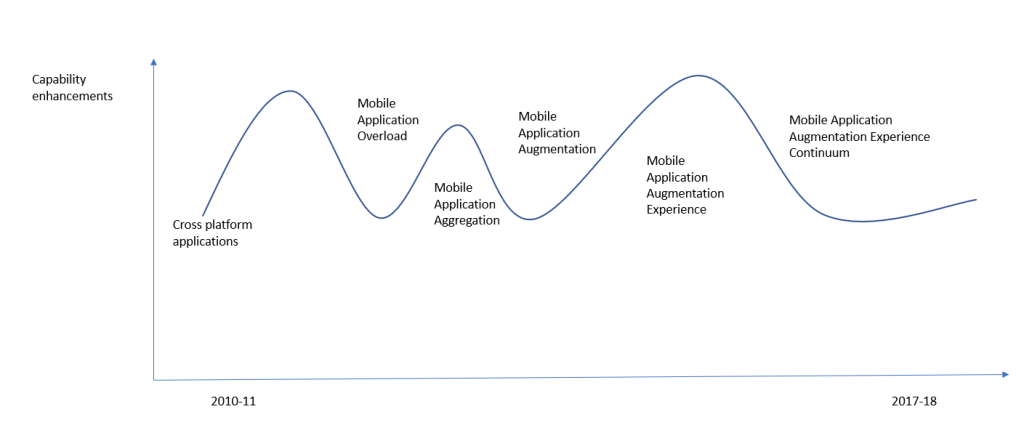

Much has been written about the Metaverse over the last few months. Dubbed the “New Internet” or the “New Application Experience”, it has become part of our extended lexicon more than we may want. But the truth is, there are many more hurdles before it becomes an indispensable part of our daily lives, the way mobile apps and websites are. Perhaps that is why Gartner placed it a good 10 years away from becoming part of our behaviours.

From a component architecture perspective, there are a lot more moving parts to this stack. Yet one specific aspect of this new concept deserves attention: the Industrial Metaverse. It is discussed with much fervor alongside IIoT, Industry 4.0 and Industry 5.0.

Like me, you may wonder: we have always had digital twins, so what is the new angle here? Let us understand a few things before we peel the architectural onion of the Industrial Metaverse.

Everyone has been saying “let us build a Digital Twin”, treating it as almost synonymous with the metaverse and with plain 3D model visualization of machines and plants, which is only half the truth. A good-looking, interactive 3D model, the ability to show some data on top of 3D models, or a simulator with 3D models attached is not a Digital Twin! At least, I don’t subscribe to that definition.

How do I see the Industrial Metaverse forming? As an evolution of virtual models and OT (operational technology) solutions.

Product engineering teams have always had high-resolution, detailed 3D models in various formats, with companies like Dassault Systèmes providing good workbenches to import, build and simulate pre-created scenarios in order to test faults on these machines: Virtual Twins. These concepts started moving further into plants and processes with the question: why are we not using these models to monitor actual plants and their operations? But the models stayed siloed, as single models inside vendor platforms, whereas the processes sat outside those platforms in the plant ecosystem. They had sophisticated simulators, but they were not a software representation of the actual plants, assets and processes. They also did not work hand in hand with other vendors' machines and simulators. Most importantly, real data could never stream into these workbenches.

On the other side, manufacturing execution platforms and hyperscaler platforms like Azure and AWS were looking for ways to give factories and plants a real-time representation of their shop floor data, securely and at scale: to model assets and processes, to predict events, and to prevent them using Industrial Platforms. They were extending their plant or cloud knowledge, moving from OT to IT or from IT to OT.

Value chains were getting extended. Both sides were searching for a software service, or a set of services, to bridge this chasm of real-time data and process handshake, each either partnering or building full-stack solutions.

Note: All the while, manufacturing plants were looking for value and not expensive technologies!

Let us call the middle region the Digital Twin: a software representation of the assets, processes, and behaviours that memoises the state of the system, contextualizes data inside the system, and raises events based on the connected behaviour of system workflows as of a point in time. I often call it the working memory of the system, with a limited playback capability. This crucial component, sitting in between, supplies the real-time nature of the system.
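To make the "working memory" idea more concrete, here is a minimal Python sketch, assuming a single-process, in-memory model. `DigitalTwin`, `Event`, and the `overheat` rule are hypothetical names for illustration only; they are not part of Azure Digital Twins or any other product. The sketch shows three behaviours from the definition above: context attached to each asset, a bounded history per signal (the limited playback), and rules that raise events as data arrives.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Dict, List, Optional, Tuple

# Hypothetical rule type: sees one reading plus the asset's context,
# returns an event name or None.
Rule = Callable[[str, str, float, Dict[str, str]], Optional[str]]


@dataclass
class Event:
    asset_id: str
    name: str
    timestamp: float
    value: float


@dataclass
class DigitalTwin:
    """Hypothetical working memory of a plant: context, bounded history, events."""
    history_size: int = 100  # limited playback: older samples are evicted
    rules: List[Rule] = field(default_factory=list)
    context: Dict[str, Dict[str, str]] = field(default_factory=dict)
    history: Dict[Tuple[str, str], Deque[Tuple[float, float]]] = field(default_factory=dict)
    events: List[Event] = field(default_factory=list)

    def register_asset(self, asset_id: str, **metadata: str) -> None:
        # Context such as production line or process step travels with the asset.
        self.context[asset_id] = dict(metadata)

    def ingest(self, asset_id: str, signal: str, timestamp: float, value: float) -> List[Event]:
        # Assumes samples for a signal arrive in timestamp order.
        if asset_id not in self.context:
            raise KeyError(f"unknown asset {asset_id!r}")
        buf = self.history.setdefault((asset_id, signal), deque(maxlen=self.history_size))
        buf.append((timestamp, value))
        raised: List[Event] = []
        for rule in self.rules:
            name = rule(asset_id, signal, value, self.context[asset_id])
            if name is not None:
                raised.append(Event(asset_id, name, timestamp, value))
        self.events.extend(raised)
        return raised

    def value_at(self, asset_id: str, signal: str, timestamp: float) -> Optional[float]:
        # Playback: last retained value at or before `timestamp`,
        # or None if no retained sample is that old.
        result: Optional[float] = None
        for ts, value in self.history.get((asset_id, signal), ()):
            if ts > timestamp:
                break
            result = value
        return result


def overheat(asset_id: str, signal: str, value: float, ctx: Dict[str, str]) -> Optional[str]:
    # Hypothetical condition-based rule; the threshold is illustrative.
    return "overheat" if signal == "temperature" and value > 80.0 else None


twin = DigitalTwin(history_size=3, rules=[overheat])
twin.register_asset("press-01", line="A", step="stamping")
twin.ingest("press-01", "temperature", 1.0, 70.0)
twin.ingest("press-01", "temperature", 2.0, 85.0)  # raises an "overheat" event
print(twin.value_at("press-01", "temperature", 1.5))  # 70.0
print([e.name for e in twin.events])  # ['overheat']
```

The small `history_size` is deliberate: it mirrors the "limited playback" of a working memory rather than a full historian. Long-term storage would sit in a separate analytics store.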

This Digital Twin is the secret sauce for having a good Virtual Twin on top and low-latency (autonomous or condition-based) control feedback to the Industrial Platform below.

Paraphrasing a statement by a Siemens executive (to be attributed once I figure out the details):

The consumer metaverse may not need real-time data, but the industrial metaverse needs real-time data to succeed.

Hence, the platform in the middle, with the bindings between the layers, should seamlessly bring the real-time data view of the plant / factory / industry into the virtual world (making the metaverse possible).

You can see from the earlier rough sketch that two interfaces are broadly needed to make this happen. One elevates the virtual twin experiences, bringing them closer to the Metaverse experience continuum mentioned in an earlier blog of mine. The other gets real-time information from the OT / physical ecosystem within the factory. These two are integral to moving from the current status quo of plain 3D models with data to a metaverse application.

1. The Visualization Interface for Metaverse Apps

The Virtual Twin, bound to the Digital Twin, is what creates the entire universe of Metaverse Apps: highly responsive renderings of the plant that let us seamlessly access a particular machine and troubleshoot it remotely. That definitely needs infrastructure support like 5G and 6G, but also some kind of spatial software support for seamless collaboration and spatial sensing. The component architecture that comes to mind is Microsoft Mesh. I don’t want to repeat the Microsoft documentation here.

I believe that the link above gives a very good idea of how that platform should evolve to deliver the seamless B2B2C experience I mentioned in my previous blog. It covers immersive presence, spatially anchored maps, rendering acceleration, multi-user shared experiences, streaming data, security, and an out-of-the-box SDK that accelerates the application layer, with data binding from the API layer below (the digital twin layer).

I would add that this experience should be as simple as taking a call on the Microsoft Teams mobile app and transferring it to the Teams desktop application with a single click, without any latency. Also, in the context of the Industrial Metaverse, this would be much easier to set up, since you can have dedicated infrastructure like 5G, Active Directory based secure logins on wearables, controlled devices, a limited number of simultaneous users and the like. This lets us guarantee comparable infrastructure at the network, connectivity, device and security layers, with ample governance, much like today’s internet and the devices used to access information from within a manufacturing facility.

2. The Data Interface for Industrial Data

With numerous firewalls and air-gapped networks between the factory floor and the internet, this one was the tougher interface. The good news: this is almost a solved problem, thanks to private and hybrid networks, high-security interfaces, localized secure processing on purpose-built hardware, limited data exposure, one-way communication that avoids command-and-control interfaces, and so on. This limits the exposure of OT while still providing as much data and processing as needed, at speeds and latencies acceptable for populating the Digital Twins in the cloud middleware. Moreover, many of the hyperscalers have software runtimes and stacks that start in the cloud and extend to the edge within OT. MES vendors are upping their game too, with stacks that start at the edge and synchronize to the cloud. This creates the opportunity to run reactive, predictive and preventive workloads at the edge and in the cloud, with visualization and actions flowing back and forth between physical space and the Digital Twin.
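As a rough illustration of the edge side of this interface, the following Python sketch assumes an edge component that sees raw OT samples, keeps only allowlisted signals, aggregates them locally, and exposes no inbound command path. `OneWayEdgeGateway` is a hypothetical name, and the in-process `queue.Queue` merely stands in for a one-way link. A real deployment would rely on a hardware data diode or a vendor's edge runtime instead.

```python
import json
import queue
import statistics
from typing import Dict, Iterable, List, Tuple


class OneWayEdgeGateway:
    """Hypothetical edge component that only ever pushes data outward.

    There is deliberately no method that accepts commands from the cloud side.
    """

    def __init__(self, allowed_signals: Iterable[str], window: int, outbound: queue.Queue) -> None:
        self._allowed = frozenset(allowed_signals)  # limited data exposure via an allowlist
        self._window = window                       # samples aggregated per published message
        self._outbound = outbound                   # stand-in for a one-way link
        self._buffers: Dict[Tuple[str, str], List[float]] = {}

    def on_sample(self, asset_id: str, signal: str, value: float) -> None:
        if signal not in self._allowed:
            return  # signals that are not approved never leave the OT network
        buf = self._buffers.setdefault((asset_id, signal), [])
        buf.append(value)
        if len(buf) >= self._window:
            self._publish(asset_id, signal, buf)
            buf.clear()

    def _publish(self, asset_id: str, signal: str, values: List[float]) -> None:
        # Localized processing: only a compact summary crosses the boundary.
        summary = {
            "asset": asset_id,
            "signal": signal,
            "count": len(values),
            "mean": statistics.fmean(values),
            "max": max(values),
        }
        self._outbound.put(json.dumps(summary))


link: queue.Queue = queue.Queue()
gateway = OneWayEdgeGateway(allowed_signals={"vibration"}, window=3, outbound=link)
for v in (0.2, 0.4, 0.9):
    gateway.on_sample("pump-07", "vibration", v)
gateway.on_sample("pump-07", "setpoint", 42.0)  # not allowlisted, so it is dropped
msg = json.loads(link.get_nowait())
print(msg["count"], msg["max"])  # 3 0.9
```

Aggregating at the edge trades raw detail for lower exposure and bandwidth. The window size and the choice of mean and max are illustrative assumptions, not a recommendation.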

With these two interfaces maturing, we may well have an Industrial Metaverse sooner than a Consumer Metaverse. Maybe it is already here.

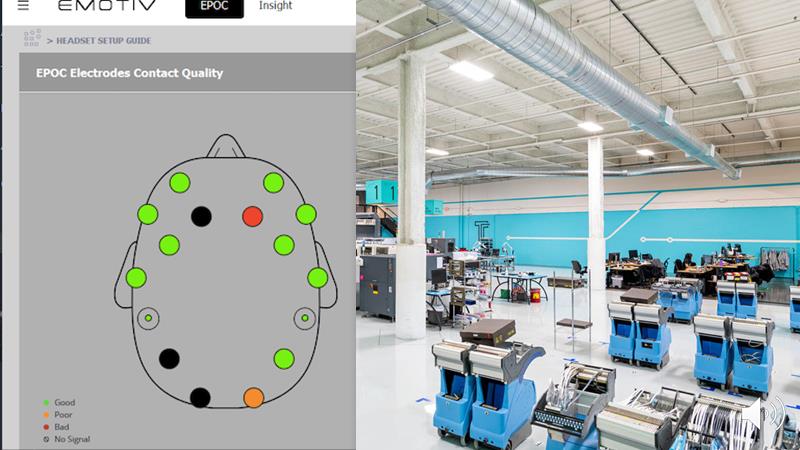

There are many innovative solutions from PTC, Azure, AWS, Siemens and others, along with their numerous partners and many solution integrators, that have been showcased and deployed to clients. Many such lightweight or wannabe Metaverse solutions have already been proven in PoCs or are in use at industrial facilities. For example, check out this Build session, towards the 20th minute. This detailed Microsoft blog (image below), with its examples, gives the full-stack view of their Industrial Metaverse. Here, the digital twin layer, abstracted in my blog, brings together Azure AI and autonomous systems, Synapse Analytics and Azure Digital Twins to form the middle stack. Azure IoT is the Data Interface for the factory, and Microsoft Mesh is the Visualization Interface for Metaverse Apps built on HoloLens-like devices. Power Platform is part of the Digital Twin layer, but its application interface, PowerApps, still sits in the Metaverse layer. I am sure an expert on another stack will have something similar.

As the case studies presented there show, these solutions are helping us build simple digital representations, virtual worlds, autonomous self-correcting systems and more.

To summarize, I believe the Industrial Metaverse is already here in many forms, in its many lightweight avatars. Our joint effort should be directed towards creating the right experience continuum within the use cases, to create tangible and usable solutions that help the end personas collaborate remotely, improve first-time fix rates, visualize the impact of predicted changes, build operators' muscle memory and the like.

Disclaimer: The author works for Accenture and uses Microsoft services to architect and build manufacturing platforms and applications on top of them.

Media images generated by DALL·E 2

Previous blog – https://3logr.com/2022/09/27/moving-from-met-averse-to-metaverse-apps/